AI needs electricity like a city needs water. The problem is, the pipes are running out.

The International Energy Agency projects that global data centre electricity demand will hit over 1,000 terawatt-hours by 2030. That is more than Japan consumes in a year. Tech companies are spending over $400 billion a year on data centre infrastructure, and the bottleneck is no longer money or chips. It is power. Grids are maxed out. Substations take years to build. Communities do not want 500-megawatt campuses in their backyard.
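To put that number in perspective, here is a rough back-of-the-envelope conversion from annual energy to continuous power. It is only a sketch: it takes the 1,000 terawatt-hour and 500-megawatt figures quoted above at face value and assumes, unrealistically, that demand is spread evenly across the year and that every campus runs at full load.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def average_power_gw(annual_twh: float) -> float:
    """Convert annual energy in TWh to average continuous power in GW."""
    # 1 TWh = 1,000 GWh; dividing by hours in a year gives average GW.
    return annual_twh * 1000 / HOURS_PER_YEAR


demand_gw = average_power_gw(1000)   # the 1,000 TWh figure cited above
campuses = demand_gw * 1000 / 500    # equivalent number of 500 MW campuses
print(f"{demand_gw:.0f} GW average, about {campuses:.0f} campuses of 500 MW")
```

Under those assumptions, the projection works out to something over 100 gigawatts of round-the-clock draw, or a couple of hundred 500-megawatt campuses running flat out.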

So what do you do when land-based infrastructure cannot keep up? One Oregon startup thinks the answer is to put AI data centres in the ocean.

Meet Panthalassa

Panthalassa just raised $140 million in a Series B led by Peter Thiel. The company builds autonomous floating data centres that generate their own power from wave energy, operate without a grid connection, and run AI workloads in the middle of the ocean.

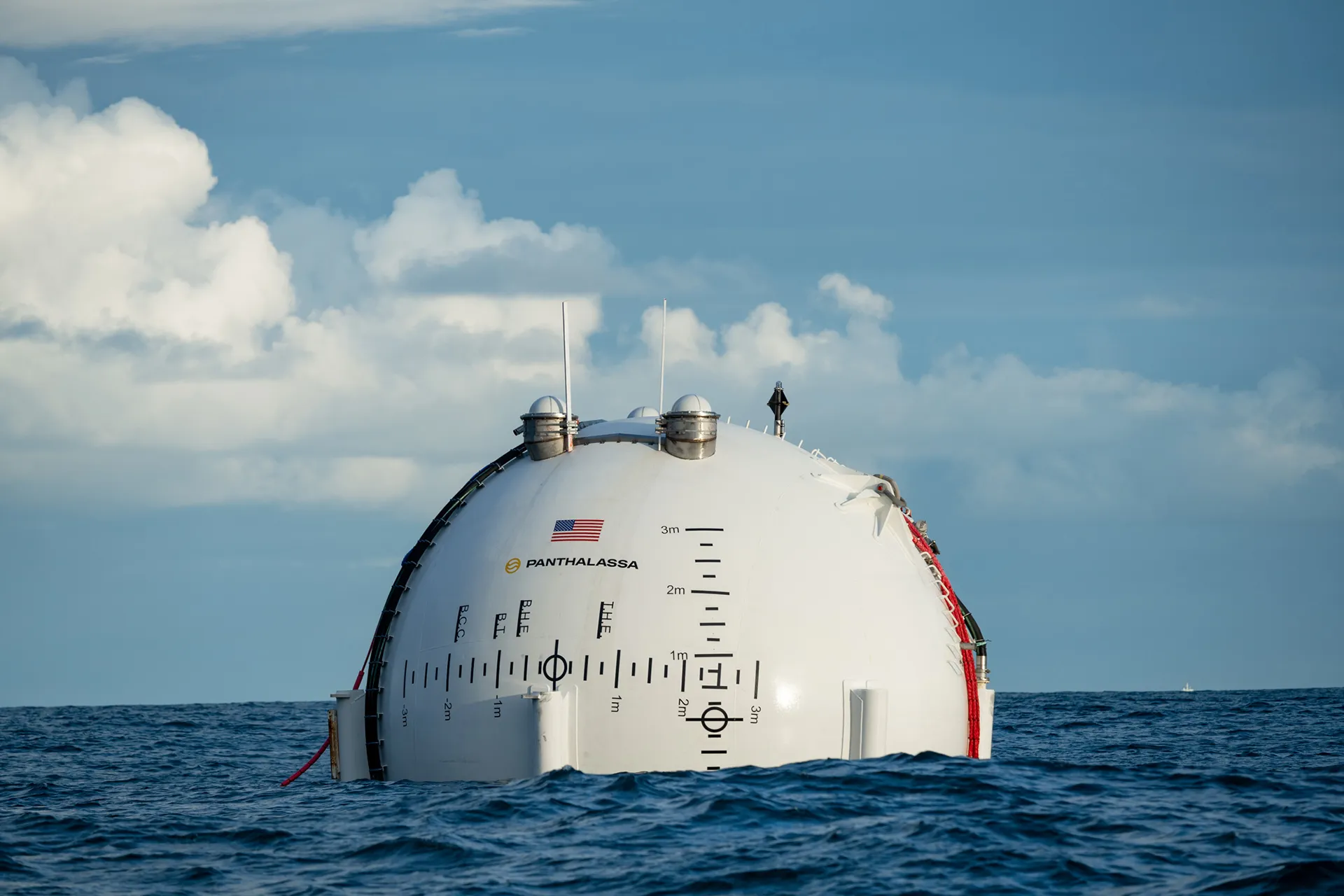

The design looks like a giant golf ball sitting on a tee. The whole thing stands 85 metres tall, roughly the height of Big Ben, and is made of plate steel. It gets towed out to sea by a boat, then self-propels to its designated location.

Here is how it works. The "tee" contains a long tube that is open at the bottom. As ocean waves lift and drop the structure, sea water pushes through the tube and into the hollow "ball" on top. The moving water spins turbines that generate electricity, which powers onboard GPUs, computing hardware, and satellite communications equipment.
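How much energy is actually in those waves? A common textbook approximation for deep-water waves gives the power carried per metre of wave front as ρg²H²T / (64π), where H is the significant wave height and T is the wave energy period. The sketch below applies that formula to a sea state chosen purely for illustration (3-metre waves with a 10-second period). These are not Panthalassa's design figures, and any real device captures only a fraction of the incoming energy.

```python
import math

RHO_SEAWATER = 1025.0  # kg/m^3, typical seawater density
G = 9.81               # m/s^2, gravitational acceleration


def wave_power_per_metre(hs_m: float, te_s: float) -> float:
    """Deep-water wave energy flux in watts per metre of wave crest.

    hs_m: significant wave height in metres
    te_s: wave energy period in seconds
    """
    return RHO_SEAWATER * G**2 * hs_m**2 * te_s / (64 * math.pi)


# Illustrative sea state only; real sites vary by season and location.
flux = wave_power_per_metre(hs_m=3.0, te_s=10.0)
print(f"{flux / 1000:.0f} kW per metre of wave front")
```

Because power scales with the square of wave height, doubling the waves quadruples the available energy, which is part of why siting matters so much for a platform like this.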

Cooling is handled by the ocean itself. The servers sit in sealed modules below the waterline, and the container wall acts as a heat exchanger. Heat dissipates directly into the surrounding cold water. No massive cooling towers. No freshwater consumption. Just the ocean doing what it does.

Why This Is Interesting

The pitch is not "we built a better data centre." The pitch is "we built compute where land, water, and power are hardest to get."

That distinction matters. A lot of AI infrastructure expansion is being blocked not because companies lack capital, but because local grids are constrained. Power interconnection queues are years long. Substations and transmission lines take ages to permit and build. Communities increasingly resist giant onshore data centre campuses.

An offshore platform that generates its own renewable power and cools itself for free bypasses that entire bottleneck. And because these are modular, you could theoretically manufacture them in a shipyard and deploy them wherever ocean conditions are right.

But the Ocean Is Not Kind to Machines

Jonathan Koomey, a former researcher at Lawrence Berkeley National Laboratory and an expert on data centre energy, put it simply: "Wave power is an old technology and it can work, but the ocean is a harsh environment. The salt and the waves are effective at causing trouble for machinery."

Salt corrodes metal. Storms batter structures. Biofouling clogs surfaces. And when something breaks 200 kilometres offshore, you cannot just send a technician in a van.

Jacqueline Davis at the Uptime Institute, the global authority on data centre performance, points out that even highly automated onshore data centres still frequently need human physical intervention. "Our data consistently names power and networking as the top two root causes of data centre outages," Davis says. "These can each be uniquely difficult to manage in a remote environment with little to no staff."

Manual restarts of cooling compressors, hardware swaps, and network troubleshooting are routine in ordinary data centres. Doing them on a floating platform in the middle of the Pacific is a different game entirely.

The Latency Problem

There is another big constraint: connectivity. Panthalassa plans to transmit data via Starlink satellites. That works for certain AI workloads, specifically batch jobs that can run for hours or days and then return results, like training large models or running scientific simulations.

But most consumer AI applications, such as chatbots, search assistants, and real-time tools, need low latency and constant network communication. Satellite links cannot match fibre optic cables on either metric. So floating data centres are, for now, limited to a specific class of AI work.

As Davis notes, Panthalassa's approach will become more viable "if the total power needs of running trained AIs grow enough to rival those of AI training." Until then, the consumer-facing AI workloads that drive most demand will stay on land.
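A quick transfer-time calculation shows why batch work is the natural fit. The sketch below is illustrative only: the 500 GB payload and both link speeds are hypothetical numbers picked for the example, not measured Starlink or Panthalassa figures, and it ignores protocol overhead and latency.

```python
def transfer_hours(data_gb: float, link_mbps: float) -> float:
    """Hours needed to move data_gb gigabytes over a link_mbps megabit/s link."""
    bits = data_gb * 8e9                 # decimal gigabytes to bits
    seconds = bits / (link_mbps * 1e6)   # megabits per second to bits per second
    return seconds / 3600


# Hypothetical figures: a 500 GB results set sent over an assumed
# 200 Mbps satellite link versus an assumed 10 Gbps fibre link.
satellite_h = transfer_hours(500, 200)
fibre_h = transfer_hours(500, 10_000)
print(f"satellite: {satellite_h:.1f} h, fibre: {fibre_h * 60:.1f} min")
```

With those assumed numbers, the satellite transfer takes hours where fibre takes minutes. That is tolerable for a training run that already lasts days, and a non-starter for a chatbot that has to answer in under a second.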

They Are Not Alone

Panthalassa is not the only company thinking about this. Aikido Technologies is building floating data centres integrated into offshore wind platforms, with each unit capable of powering more than 10 megawatts of compute. The company has already been selected for an NVIDIA programme and says it has identified over 50 gigawatts of distressed floating wind sites globally that could be repurposed for sovereign data centre deployment.

Mitsui O.S.K. Lines, the Japanese shipping giant, is studying ship-based computing systems powered by marine energy. And Microsoft already tested the concept with Project Natick, which sank a shipping-container-sized data centre off Scotland's Orkney Islands in 2018. When they pulled it up two years later, the underwater servers had a failure rate one-eighth that of comparable land-based servers. Turns out, removing oxygen, humidity, and human interference is really good for hardware reliability.

But Microsoft ultimately shelved Project Natick. The economics and operational model did not scale the way they needed it to.

The Real Question

Koomey frames it well: "There are economies of scale to building data centres, which is why they are getting so large nowadays. They build big ones to spread fixed costs over more compute. It is a lot harder and more risky to build big compute installations on the water than on land."

That is the core tension. Land-based data centres benefit from decades of operational learning, established supply chains, grid connections, fibre networks, and human-accessible maintenance. Floating platforms have to replicate all of that from scratch, in a hostile environment, at competitive cost.

Can they do it? Maybe. For specific workloads, in specific geographies, where grid access is genuinely impossible. But as a broad replacement for the hyperscale campuses that power most of the world's AI? Not anytime soon.

The Honest Take

Floating data centres are a fascinating pressure valve for an infrastructure system that is genuinely running out of room. The energy math is real. The grid constraints are real. The cooling advantages are real.

But the maintenance risk is real too. So is the latency limitation. So is the economic gap between building on stable ground with fibre backbones and building on moving water with satellite links.

Panthalassa is not solving AI's energy crisis. But it might be building the first serious workaround for the places where the crisis hits hardest.

And sometimes, a good workaround is worth $140 million.

Source: New Scientist, "Can floating data centres meet AI's huge energy demand?"